Transparent AI for Healthcare: Strategy & Auditing

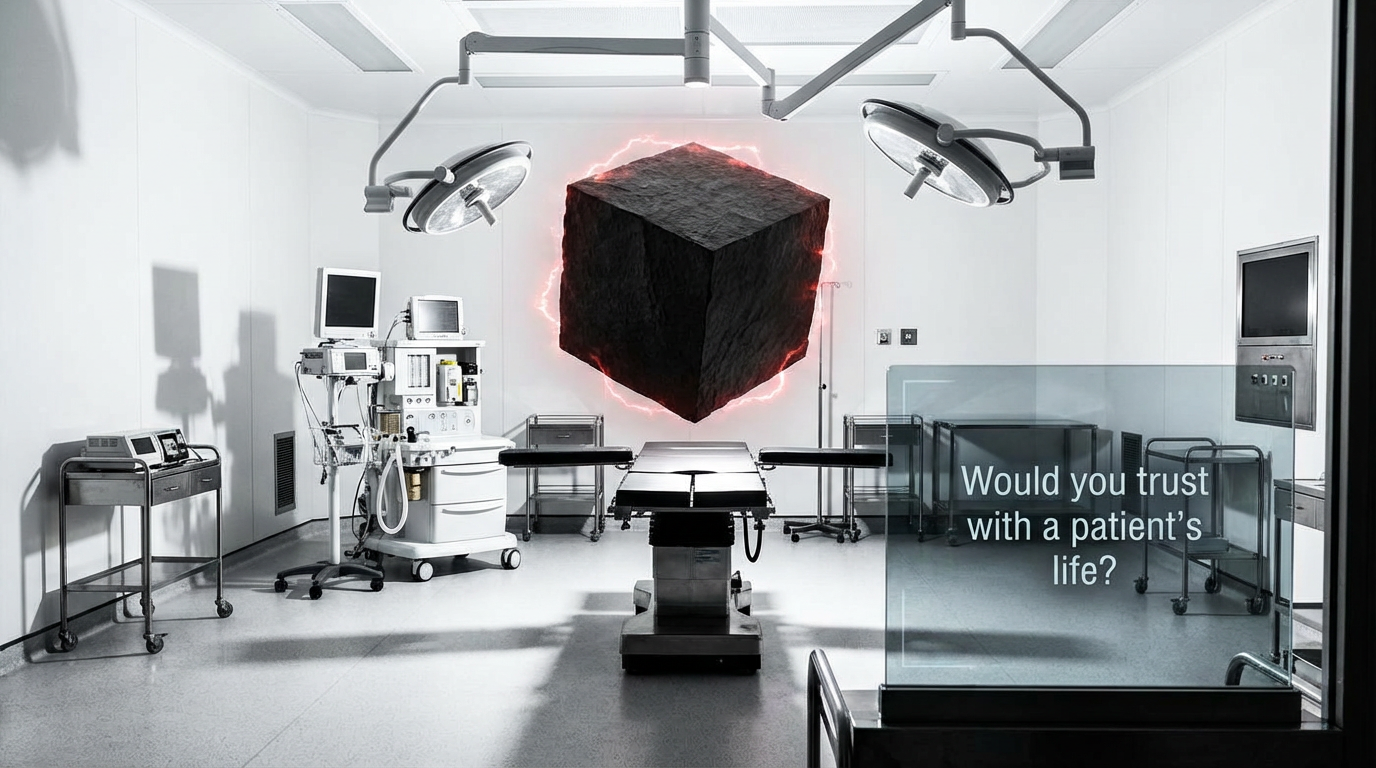

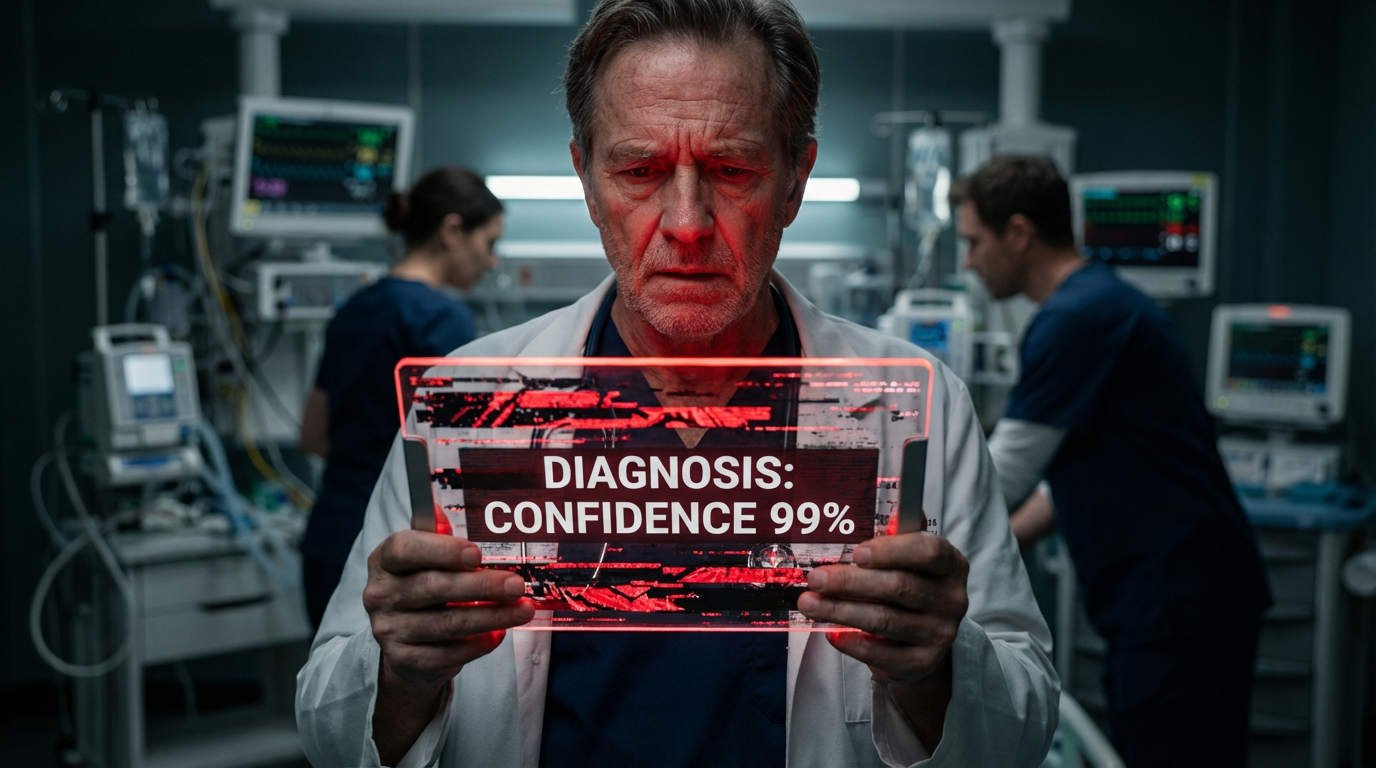

Don't let "black box" models become your liability. Learn the CodexCore methodology to build compliant, explainable, and trustworthy medical AI.

- Audit & Compliance: Master FDA/EU AI requirements.

- Bias Detection: Use visual tools to identify bias risks.

Join the Waitlist

Get early access plus our free FDA/EU Compliance Checklist PDF.